- Wget Command in Linux with Examples

- Installing Wget

- Installing Wget on Ubuntu and Debian

- Installing Wget on CentOS and Fedora

- Wget Command Syntax

- How to Download a File with Wget

- Using Wget to Save the Downloaded File Under a Different Name

- Using Wget to Download a File to a Specific Directory

- How to Limit the Download Speed with Wget

- How to Resume a Download with Wget

- How to Download in the Background with Wget

- How to Change the Wget User-Agent

- How to Download Multiple Files with Wget

- Using Wget to Download over FTP

- Using Wget to Create a Mirror of a Website

- How to Skip Certificate Checking with Wget

- How to Download to Standard Output with Wget

- Conclusion

- 10 Wget (Linux File Downloader) Command Examples in Linux

- 1. Single file download

- 2. Download file with different name

- 3. Download multiple files with HTTP and FTP protocols

- 4. Read URLs from a file

- 5. Resume incomplete download

- 6. Download file with appended .1 in file name

- 7. Download files in background

- 8. Restrict download speed limits

- 9. Restricted FTP and HTTP downloads with username and password

- 10. Find wget version and help


- Linux wget command

- Description

- Overview

- Installing wget

- Syntax

- Basic startup options

- Logging and input file options

- Download options

- Directory options

- HTTP options

- HTTPS (SSL/TLS) options

- FTP options

- Recursive retrieval options

- Recursive accept/reject options

- Files

- Examples

- Related commands

Wget Command in Linux with Examples

In this tutorial, we will show you how to use the Wget command through practical examples and detailed explanations of the most common Wget options.

Installing Wget

The wget package comes preinstalled on most Linux distributions.

To check whether the Wget package is installed on your system, open your console, type wget, and press Enter. If wget is installed, the system will print wget: missing URL; otherwise, it will print wget: command not found.

If wget is not installed, you can easily install it using your distribution's package manager.

Installing Wget on Ubuntu and Debian

sudo apt install wget

Installing Wget on CentOS and Fedora

sudo yum install wget

Wget Command Syntax

Before going into how to use the wget command, let's start by reviewing the basic syntax.

The wget utility expressions take the following form:

wget [options] [url]

- options: the Wget options

- url: the URL of the file or directory you want to download or synchronize.

How to Download a File with Wget

In its simplest form, when used without any option, wget will download the resource specified in the [url] to the current directory.

In the following example, we are downloading the Linux kernel tar archive:

As you can see from the image above, Wget starts by resolving the domain's IP address, then connects to the remote server and starts the transfer.

During the download, Wget shows the progress bar alongside the file name, file size, download speed, and the estimated time for the download to complete. Once the download is complete, you can find the downloaded file in your current working directory.

To turn off Wget's output, use the -q option.

If the file already exists, Wget will add .N (a number) at the end of the file name.
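The naming rule above can be modelled in a few lines of Python. This is a simplified sketch, not wget's source: the helper names are hypothetical, and the index.html fallback for URLs ending in a slash is an assumption about wget's usual behavior.

```python
import os
import posixpath
from typing import Callable
from urllib.parse import unquote, urlsplit


def local_name_from_url(url: str) -> str:
    """Derive a local file name from the last path component of a URL."""
    name = posixpath.basename(unquote(urlsplit(url).path))
    # Assumption: a URL ending in "/" has no file component, so fall back to index.html.
    return name or "index.html"


def next_free_name(name: str, exists: Callable[[str], bool] = os.path.exists) -> str:
    """Return name, or name.1, name.2, ... for the first name that is not taken yet."""
    if not exists(name):
        return name
    n = 1
    while exists(f"{name}.{n}"):
        n += 1
    return f"{name}.{n}"


if __name__ == "__main__":
    taken = {"archive.tar.xz", "archive.tar.xz.1"}  # hypothetical files already on disk
    base = local_name_from_url("https://example.com/pub/archive.tar.xz")
    print(next_free_name(base, exists=taken.__contains__))  # archive.tar.xz.2
```

Passing `exists` as a parameter keeps the naming logic testable without touching the real file system.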

Using Wget to Save the Downloaded File Under a Different Name

To save the downloaded file under a different name, pass the -O option followed by the chosen name:

The command above will save the latest hugo zip file from GitHub as latest-hugo.zip instead of its original name.

Using Wget to Download a File to a Specific Directory

By default, Wget saves the downloaded file in the current working directory. To save the file to a specific location, use the -P option:

With the command above, we are telling Wget to save the CentOS 7 ISO file to the /mnt/iso directory.

How to Limit the Download Speed with Wget

To limit the download speed, use the --limit-rate option. By default, the speed is measured in bytes per second. Append k for kilobytes, m for megabytes, and g for gigabytes.

The following command will download the Go binary and limit the download speed to 1 MB per second:

This option is useful when you don't want wget to consume all the available bandwidth.
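To make the suffix arithmetic concrete, here is a small Python sketch that turns a --limit-rate value into bytes per second. It assumes 1024-based multipliers, so 1m means 1,048,576 bytes per second. The function name `parse_rate` is hypothetical.

```python
# Assumed 1024-based multipliers for the k, m and g suffixes.
SUFFIXES = {"": 1, "k": 1024, "m": 1024 ** 2, "g": 1024 ** 3}


def parse_rate(value: str) -> float:
    """Convert a rate such as '1m', '100k' or '2.5k' to bytes per second."""
    value = value.strip().lower()
    suffix = value[-1] if value and value[-1] in "kmg" else ""
    number = value[: len(value) - len(suffix)]
    return float(number) * SUFFIXES[suffix]
```

For example, `parse_rate("1m")` gives the byte rate for the 1 MB/s limit described above.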

How to Resume a Download with Wget

You can resume a download using the -c option. This is useful if your connection drops during the download of a large file: instead of starting the download from scratch, you can continue the previous one.

In the following example, we are resuming the download of the Ubuntu 18.04 ISO file:

If the remote server does not support resuming downloads, Wget will refuse to start over and overwrite the existing partial file; delete that file if you want to restart the download from the beginning.
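Over HTTP, resuming relies on the Range request header: the client asks only for the bytes after those it already has. The Python sketch below uses the standard library to show that mechanism. It is not wget's code, and the file names and URLs are hypothetical.

```python
import os
import urllib.request


def build_resume_request(url: str, local_path: str) -> urllib.request.Request:
    """Build a GET request that asks only for the bytes missing from local_path."""
    have = os.path.getsize(local_path) if os.path.exists(local_path) else 0
    request = urllib.request.Request(url)
    if have > 0:
        # "bytes=N-" means "from offset N to the end of the resource".
        request.add_header("Range", f"bytes={have}-")
    return request


def resume_download(url: str, local_path: str, chunk_size: int = 64 * 1024) -> None:
    """Append the remaining bytes of url to local_path (needs network access)."""
    request = build_resume_request(url, local_path)
    with urllib.request.urlopen(request) as response:
        if request.has_header("Range") and response.status != 206:
            # The server ignored the Range header; appending would corrupt the file.
            raise RuntimeError("server does not support resuming; not appending")
        mode = "ab" if response.status == 206 else "wb"
        with open(local_path, mode) as out:
            while chunk := response.read(chunk_size):
                out.write(chunk)
```

A server that honors the range replies with 206 Partial Content. A plain 200 means it ignored the header, which is why the sketch refuses to append in that case.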

How to Download in the Background with Wget

To download in the background, use the -b option. In the following example, we are downloading the openSUSE ISO file in the background:

By default, the output is redirected to the wget-log file in the current directory. To watch the status of the download, use the tail command:

tail -f wget-log

How to Change the Wget User-Agent

Sometimes when downloading a file, the remote server may be set to block the Wget User-Agent. In situations like this, pass the -U option to emulate a different browser.

The command above will emulate Firefox 60 requesting the page from wget-forbidden.com.
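The -U option only changes the User-Agent request header. Here is the same effect as a Python sketch with urllib. The browser string and URL are illustrative assumptions, not values from the original example.

```python
import urllib.request

# Illustrative Firefox 60 User-Agent string (an assumption, not taken from the original command).
FIREFOX_UA = "Mozilla/5.0 (X11; Linux x86_64; rv:60.0) Gecko/20100101 Firefox/60.0"


def request_with_user_agent(url: str, user_agent: str) -> urllib.request.Request:
    """Build a request that identifies itself with a custom User-Agent string."""
    return urllib.request.Request(url, headers={"User-Agent": user_agent})
```

Passing the result to `urllib.request.urlopen()` sends the custom header. Note that urllib stores header names capitalized as "User-agent".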

How to Download Multiple Files with Wget

If you want to download multiple files at once, use the -i option followed by the path to a local or external file containing a list of the URLs to be downloaded. Each URL needs to be on a separate line.

In the following example, we are downloading the Arch Linux, Debian, and Fedora ISO files with the URLs specified in the linux-distros.txt file:

If you specify - as the file name, the URLs will be read from standard input.
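Here is a Python sketch of reading such a list: one URL per line, with - meaning standard input. Skipping blank lines is an assumption made here for robustness. The helper name `read_url_list` is hypothetical.

```python
import sys
from typing import Iterable, List, TextIO


def _clean(lines: Iterable[str]) -> List[str]:
    # Strip surrounding whitespace and ignore empty lines (an assumption of this sketch).
    return [line.strip() for line in lines if line.strip()]


def read_url_list(source: str, stdin: TextIO = sys.stdin) -> List[str]:
    """Return the URLs listed in a file, one per line; '-' reads standard input."""
    if source == "-":
        return _clean(stdin)
    with open(source, encoding="utf-8") as handle:
        return _clean(handle)
```

For example, `read_url_list("linux-distros.txt")` would return the three ISO URLs from the example file.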

Using Wget to Download over FTP

To download a file from a password-protected FTP server, specify the username and password with the --ftp-user and --ftp-password options.

Using Wget to Create a Mirror of a Website

To create a mirror of a website with Wget, use the -m option. This will create a complete local copy of the website by following and downloading all internal links as well as the website resources (JavaScript, CSS, images).

If you want to use the downloaded website for local browsing, you will need to pass a few extra arguments to the command above.

The -k option will cause Wget to convert the links in the downloaded documents to make them suitable for local viewing. The -p option will tell Wget to download all the files necessary to display the HTML page.
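Conceptually, -k rewrites each link so that it points at the local copy of its target, relative to the page that contains it. Below is a simplified Python sketch of that path arithmetic. The local paths are hypothetical, and wget's real converter handles more cases, such as links to files it did not download.

```python
import posixpath


def relative_link(page_path: str, target_path: str) -> str:
    """Return the link to write into page_path so that it reaches target_path locally."""
    page_dir = posixpath.dirname(page_path)
    return posixpath.relpath(target_path, start=page_dir or ".")
```

For example, a stylesheet link in example.com/blog/post.html becomes ../css/style.css.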

How to Skip Certificate Checking with Wget

If you want to download a file over HTTPS from a host that has an invalid SSL certificate, use the --no-check-certificate option:
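For comparison, this is what skipping certificate verification looks like with Python's standard ssl module. As with --no-check-certificate, it removes protection against man-in-the-middle attacks, so use it only with hosts you trust. A minimal sketch:

```python
import ssl
import urllib.request


def insecure_context() -> ssl.SSLContext:
    """Return a TLS context that neither validates certificates nor checks host names."""
    context = ssl.create_default_context()
    # check_hostname must be disabled before verify_mode can be set to CERT_NONE.
    context.check_hostname = False
    context.verify_mode = ssl.CERT_NONE
    return context


def fetch_insecure(url: str) -> bytes:
    """Download url over HTTPS without certificate checks (needs network access)."""
    with urllib.request.urlopen(url, context=insecure_context()) as response:
        return response.read()
```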

How to Download to Standard Output with Wget

In the following example, Wget will quietly (flag -q) download the latest WordPress version and write it to stdout (flag -O -), piping it to the tar utility, which will extract the archive to the /var/www directory.
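Piping wget's standard output into tar streams the archive without saving it to disk first. Here is a Python sketch of the same idea, using urllib and tarfile in streaming mode. The "data" extraction filter is applied only when the running Python provides it.

```python
import tarfile
import urllib.request
from typing import BinaryIO


def extract_stream(stream: BinaryIO, destination: str) -> None:
    """Extract a (possibly compressed) tar archive read sequentially from stream."""
    # "r|*" reads the archive as a non-seekable stream and auto-detects compression.
    with tarfile.open(fileobj=stream, mode="r|*") as archive:
        if hasattr(tarfile, "data_filter"):
            # Newer Pythons can reject unsafe members such as absolute paths.
            archive.extractall(destination, filter="data")
        else:
            archive.extractall(destination)


def download_and_extract(url: str, destination: str) -> None:
    """Stream url straight into tar extraction without a temporary file."""
    with urllib.request.urlopen(url) as response:
        extract_stream(response, destination)
```

Assuming the WordPress archive is published at https://wordpress.org/latest.tar.gz, the call would be `download_and_extract("https://wordpress.org/latest.tar.gz", "/var/www")`.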

Conclusion

With Wget, you can download multiple files, resume partial downloads, mirror websites, and combine the Wget options to suit your needs.


10 Wget (Linux File Downloader) Command Examples in Linux

In this post, we are going to review the wget utility, which retrieves files from the World Wide Web (WWW) using widely used protocols like HTTP, HTTPS, and FTP. Wget is a freely available package licensed under the GNU GPL. It can be installed on any Unix-like operating system, as well as on Windows and macOS. It's a non-interactive command-line tool. The main feature of Wget is its robustness: it's designed to work over slow or unstable network connections. In case of a network problem, Wget automatically resumes the download where it left off, and it keeps trying until the file has been retrieved completely. It can also download files recursively.

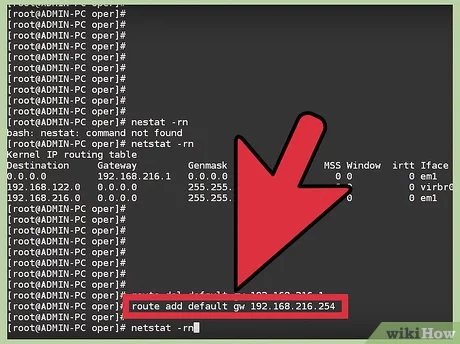

First, check whether the wget utility is already installed on your Linux box, using the following command.

If wget is not already installed, install it using the YUM command, or download the source package from http://ftp.gnu.org/gnu/wget/.

The -y option used here prevents the confirmation prompt before installing any package. For more YUM command examples and options, read the article 20 YUM Command Examples for Linux Package Management.

1. Single file download

This command downloads a single file and stores it in the current directory. It also shows the download progress, size, date, and time while downloading.

2. Download file with different name

Using the -O (uppercase) option, you can download a file under a different file name. Here we have given the file name wget.zip, as shown below.

3. Download multiple files with HTTP and FTP protocols

Here we see how to download multiple files over the HTTP and FTP protocols with a single wget command.

4. Read URLs from a file

You can store a number of URLs in a text file and download them with the -i option. Below, we have created tmp.txt under the wget directory, where we put a series of URLs to download.

5. Resume incomplete download

When downloading a big file, the download may sometimes stop. In that case, we can resume downloading the same file from where it left off with the -c option. If you start the download without specifying the -c option, wget will add a .1 extension at the end of the file name and treat it as a fresh download. So it's good practice to add the -c switch when you download big files.

6. Download file with appended .1 in file name

When you start a download without the -c option, wget adds .1 at the end of the file name and starts a fresh download. If a .1 file already exists, .2 is appended instead.

See the example files with the .1 extension appended at the end of the file name.

7. Download files in background

With the -b option, you can send the download to the background immediately after it starts, and the logs are written to the /wget/log.txt file.

8. Restrict download speed limits

With the option --limit-rate=100k, the download speed is restricted to 100 KB/s, and the logs will be created under /wget/log.txt, as shown below.

9. Restricted FTP and HTTP downloads with username and password

With the options --http-user=username and --http-password=password (for HTTP), or --ftp-user=username and --ftp-password=password (for FTP), you can download from password-restricted HTTP or FTP sites, as shown below.
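When a server uses HTTP Basic authentication, the user name and password travel in an Authorization header that is only Base64-encoded, not encrypted, so prefer HTTPS. A Python sketch of how that header is formed, using the placeholder credentials from the text:

```python
import base64


def basic_auth_header(user: str, password: str) -> str:
    """Return the Authorization header value for HTTP Basic authentication."""
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return f"Basic {token}"
```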

10. Find wget version and help

With the options --version and --help, you can view the version and help as needed.

In this article, we have covered the Linux wget command and its options for daily administrative tasks. Run man wget if you want to know more about it. Kindly share your thoughts through our comment box, or let us know if we've missed anything.


Linux wget command

On Unix-like operating systems, the wget command downloads files served with HTTP, HTTPS, or FTP over a network.

Description

wget is a free utility for non-interactive download of files from the web. It supports HTTP, HTTPS, and FTP protocols, as well as retrieval through HTTP proxies.

wget is non-interactive, meaning that it can work in the background while the user is not logged on. This allows you to start a retrieval and disconnect from the system, letting wget finish the work. By contrast, most web browsers require constant user interaction, which makes transferring a lot of data difficult.

wget can follow links in HTML and XHTML pages and create local versions of remote websites, fully recreating the directory structure of the original site, which is sometimes called "recursive downloading." While doing that, wget respects the Robot Exclusion Standard (robots.txt). wget can be instructed to convert the links in downloaded HTML files to the local files for offline viewing.
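Python's standard urllib.robotparser applies the same Robot Exclusion rules, so you can check in advance whether a recursive crawl would be allowed to fetch a path. A minimal sketch with hypothetical rules and URLs:

```python
from urllib.robotparser import RobotFileParser


def allowed(robots_lines, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt rules permit user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_lines)
    return parser.can_fetch(user_agent, url)


RULES = [
    "User-agent: *",
    "Disallow: /private/",
]

print(allowed(RULES, "Wget", "http://example.com/private/report.html"))  # False
print(allowed(RULES, "Wget", "http://example.com/public/index.html"))    # True
```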

wget has been designed for robustness over slow or unstable network connections; if a download fails due to a network problem, it will keep retrying until the whole file has been retrieved. If the server supports regetting, it will instruct the server to continue the download from where it left off.
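The retry behavior can be sketched as a simple loop. The default of 20 attempts, and the rule that fatal errors such as 404 or "connection refused" are not retried, come from the -t/--tries option described on this page. The exception classes, the fixed wait between attempts, and the helper name are hypothetical simplifications.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class FatalError(Exception):
    """An error that retrying cannot fix, e.g. HTTP 404 or connection refused."""


class TransientError(Exception):
    """A temporary failure, e.g. a timeout or a dropped connection."""


def fetch_with_retries(fetch: Callable[[], T], tries: int = 20, wait: float = 1.0) -> T:
    """Call fetch() until it succeeds, giving up after `tries` attempts (0 = forever)."""
    attempt = 0
    while True:
        attempt += 1
        try:
            return fetch()
        except FatalError:
            raise  # Fatal errors are reported immediately and never retried.
        except TransientError:
            if tries and attempt >= tries:
                raise  # Out of attempts: give up with the last error.
            time.sleep(wait)
```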

Overview

The simplest way to use wget is to provide it with the location of a file to download over HTTP. For example, to download the file http://website.com/files/file.zip, this command:

wget http://website.com/files/file.zip

...would download the file into the working directory.

There are many options that allow you to use wget in different ways, for different purposes. These are outlined below.

Installing wget

If your operating system is Ubuntu, or another Debian-based Linux distribution which uses APT for package management, you can install wget with apt-get:

sudo apt-get install wget

For other operating systems, see your package manager’s documentation for information about how to locate the wget binary package and install it. Or, you can install it from source from the GNU website at https://www.gnu.org/software/wget/.

Syntax

Basic startup options

| -V, --version | Display the version of wget, and exit. |

| -h, --help | Print a help message describing all of wget's command-line options, and exit. |

| -b, --background | Go to background immediately after startup. If no output file is specified via -o, output is redirected to wget-log. |

| -e command, --execute command | Execute command as if it were a part of the file .wgetrc. A command thus invoked will be executed after the commands in .wgetrc, thus taking precedence over them. |

Logging and input file options

| -o logfile, --output-file=logfile | Log all messages to logfile. The messages are normally reported to standard error. |

| -a logfile, --append-output=logfile | Append to logfile. This option is the same as -o, only it appends to logfile instead of overwriting the old log file. If logfile does not exist, a new file is created. |

| -d, --debug | Turn on debug output, meaning various information important to the developers of wget if it does not work properly. Your system administrator may have chosen to compile wget without debug support, in which case -d will not work. |

Note that compiling with debug support is always safe; wget compiled with the debug support will not print any debug info unless requested with -d.

If this function is used, no URLs need be present on the command line. If there are URLs both on the command line and in the input file, those on the command line will be the first ones to be retrieved. If --force-html is not specified, then file should consist of a series of URLs, one per line.

However, if you specify --force-html, the document will be regarded as HTML. In that case you may have problems with relative links, which you can solve either by adding <base href="url"> to the documents or by specifying --base=url on the command line.

If the file is an external one, the document will be automatically treated as HTML if the Content-Type is "text/html". Furthermore, the file's location will be implicitly used as base href if none was specified.

--base=URL

For instance, if you specify http://foo/bar/a.html for URL, and wget reads ../baz/b.html from the input file, it would be resolved to http://foo/baz/b.html.
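This resolution follows the standard relative-URL rules, so Python's urllib.parse.urljoin reproduces the example:

```python
from urllib.parse import urljoin

base = "http://foo/bar/a.html"  # the value passed with --base
link = "../baz/b.html"          # a relative link read from the input file
print(urljoin(base, link))      # http://foo/baz/b.html
```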

Download options

| --bind-address=ADDRESS | When making client TCP/IP connections, bind to ADDRESS on the local machine. ADDRESS may be specified as a hostname or IP address. This option can be useful if your machine is bound to multiple IPs. |

| -t number, --tries=number | Set number of retries to number. Specify 0 or inf for infinite retrying. The default is to retry 20 times, with the exception of fatal errors like "connection refused" or "not found" (404), which are not retried. |

| -O file, --output-document=file | The documents will not be written to the appropriate files, but all will be concatenated together and written to file. |

If "-" is used as file, documents will be printed to standard output, disabling link conversion. (Use "./-" to print to a file literally named "-".)

Use of -O is not intended to mean "use the name file instead of the one in the URL"; rather, it is analogous to shell redirection: wget -O file http://foo is intended to work like wget -O - http://foo > file; file will be truncated immediately, and all downloaded content will be written there.

For this reason, -N (for timestamp-checking) is not supported in combination with -O: since file is always newly created, it will always have a very new timestamp. A warning will be issued if this combination is used.

Similarly, using -r or -p with -O may not work as you expect: wget won’t just download the first file to file and then download the rest to their normal names: all downloaded content will be placed in file. This was disabled in version 1.11, but has been reinstated (with a warning) in 1.11.2, as there are some cases where this behavior can actually have some use.

Note that a combination with -k is only permitted when downloading a single document, as in that case it will just convert all relative URIs to external ones; -k makes no sense for multiple URIs when they’re all being downloaded to a single file; -k can be used only when the output is a regular file.

When running wget without -N, -nc, or -r, downloading the same file in the same directory will result in the original copy of file being preserved and the second copy being named file.1. If that file is downloaded yet again, the third copy will be named file.2, and so on. When -nc is specified, this behavior is suppressed, and wget will refuse to download newer copies of file. Therefore, "no-clobber" is a misnomer in this mode: it's not clobbering that's prevented (as the numeric suffixes were already preventing clobbering), but rather the multiple version saving that's being turned off.

When running wget with -r, but without -N or -nc, re-downloading a file will result in the new copy overwriting the old. Adding -nc will prevent this behavior, instead causing the original version to be preserved and any newer copies on the server to be ignored.

When running wget with -N, with or without -r, the decision as to whether or not to download a newer copy of a file depends on the local and remote timestamp and size of the file. -nc may not be specified at the same time as -N.
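The timestamping decision can be summarized in a few lines. This Python sketch is a simplification based on the rule stated above (timestamp and size are compared). The function name is hypothetical, and wget's real logic handles more cases, such as servers that send no modification time.

```python
from typing import Optional


def needs_download(local_mtime: Optional[float], local_size: Optional[int],
                   remote_mtime: float, remote_size: Optional[int]) -> bool:
    """Decide whether -N style timestamping should fetch the remote file."""
    if local_mtime is None:
        return True  # No local copy yet.
    if remote_mtime > local_mtime:
        return True  # The server has a newer version.
    if remote_size is not None and remote_size != local_size:
        return True  # Not newer, but a different size: assume the local copy is stale.
    return False
```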

Note that when -nc is specified, files with the suffixes .html or .htm will be loaded from the local disk and parsed as if they had been retrieved from the web.

If there is a file named ls-lR.Z in the current directory, wget will assume that it is the first portion of the remote file, and will ask the server to continue the retrieval from an offset equal to the length of the local file.

Note that you don’t need to specify this option if you just want the current invocation of wget to retry downloading a file should the connection be lost midway through, which is the default behavior. -c only affects resumption of downloads started prior to this invocation of wget, and whose local files are still sitting around.

Without -c, the previous example would just download the remote file to ls-lR.Z.1, leaving the truncated ls-lR.Z file alone.

Beginning with wget 1.7, if you use -c on a non-empty file, and it turns out that the server does not support continued downloading, wget will refuse to start the download from scratch, which would effectively ruin existing contents. If you really want the download to start from scratch, remove the file.

Also, beginning with wget 1.7, if you use -c on a file that is of equal size as the one on the server, wget will refuse to download the file and print an explanatory message. The same happens when the file is smaller on the server than locally (presumably because it was changed on the server since your last download attempt), because "continuing" is not meaningful, no download occurs.

On the other hand, while using -c, any file that’s bigger on the server than locally will be considered an incomplete download and only (length(remote) - length(local)) bytes will be downloaded and tacked onto the end of the local file. This behavior can be desirable in certain cases: for instance, you can use wget -c to download just the new portion that’s been appended to a data collection or log file.

However, if the file is bigger on the server because it’s been changed, as opposed to just appended to, you’ll end up with a garbled file. wget has no way of verifying that the local file is really a valid prefix of the remote file. You need to be especially careful of this when using -c in conjunction with -r, since every file will be considered as an "incomplete download" candidate.

Another instance where you’ll get a garbled file if you try to use -c is if you have a lame HTTP proxy that inserts a "transfer interrupted" string into the local file. In the future a "rollback" option may be added to deal with this case.

Note that -c only works with FTP servers and with HTTP servers that support the "Range" header.
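The -c rules above can be condensed into a small decision function. This Python sketch restates the documented behavior; it is not wget's code, and the function name and return strings are hypothetical. Like wget, it cannot tell whether the local file is really a prefix of the remote one.

```python
def resume_plan(local_size: int, remote_size: int, server_supports_ranges: bool) -> str:
    """Describe what 'wget -c' does for one file, following the rules in the text."""
    if local_size == 0:
        return "download"  # Nothing to continue: a normal download.
    if remote_size <= local_size:
        return "skip"      # Equal or smaller on the server: continuing is not meaningful.
    if not server_supports_ranges:
        return "refuse"    # Starting over would ruin the existing contents.
    # Only the missing tail is fetched and appended to the local file.
    return f"append {remote_size - local_size} bytes"
```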

The "bar" indicator is used by default. It draws an ASCII progress bar graphic (a.k.a. "thermometer" display) indicating the status of retrieval. If the output is not a TTY, the "dot" bar will be used by default.

Use --progress=dot to switch to the "dot" display. It traces the retrieval by printing dots on the screen, each dot representing a fixed amount of downloaded data.

When using the dotted retrieval, you may also set the style by specifying the type as dot:style. Different styles assign different meaning to one dot. With the "default" style each dot represents 1K, there are ten dots in a cluster and 50 dots in a line. The "binary" style has a more "computer"-like orientation: 8K dots, 16-dot clusters and 48 dots per line (which makes for 384K lines). The "mega" style is suitable for downloading very large files; each dot represents 64K retrieved, there are eight dots in a cluster, and 48 dots on each line (so each line contains 3M).
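The style figures above are easy to check: the data per line is the dot size multiplied by the number of dots per line. A quick Python check, assuming 1K = 1024 bytes:

```python
K = 1024

# style: (bytes per dot, dots per cluster, dots per line), as described in the text
DOT_STYLES = {
    "default": (1 * K, 10, 50),
    "binary": (8 * K, 16, 48),
    "mega": (64 * K, 8, 48),
}

for style, (dot_bytes, cluster, per_line) in DOT_STYLES.items():
    line_kib = dot_bytes * per_line // K
    cluster_kib = dot_bytes * cluster // K
    print(f"{style}: {line_kib}K per line, {cluster_kib}K per cluster")
```

The "mega" line works out to 3072K, which is the 3M stated above.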

Note that you can set the default style using the progress command in .wgetrc. That setting may be overridden from the command line. The exception is that, when the output is not a TTY, the "dot" progress will be favored over "bar". To force the bar output, use --progress=bar:force.

By default, when a file is downloaded, its timestamps are set to match those from the remote file, which allows the use of --timestamping on subsequent invocations of wget. However, it is sometimes useful to base the local file's timestamp on when it was actually downloaded; for that purpose, the --no-use-server-timestamps option has been provided.
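Setting a file's modification time from an HTTP Last-Modified value, as in the default behavior described above, can be sketched with Python's standard library. The header value is sample input and the helper name is hypothetical.

```python
import os
from email.utils import parsedate_to_datetime


def apply_server_timestamp(path: str, last_modified: str) -> float:
    """Set path's access and modification times to an HTTP Last-Modified value."""
    # A "GMT" date parses to a timezone-aware datetime, so .timestamp() is unambiguous.
    timestamp = parsedate_to_datetime(last_modified).timestamp()
    os.utime(path, (timestamp, timestamp))
    return timestamp
```

For example, `apply_server_timestamp("file.zip", "Sun, 06 Nov 1994 08:49:37 GMT")` gives file.zip that date as its modification time.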

This feature needs much more work for wget to get close to the functionality of real web spiders.

When interacting with the network, wget can check for timeout and abort the operation if it takes too long. This prevents anomalies like hanging reads and infinite connects. The only timeout enabled by default is a 900-second read timeout. Setting a timeout to 0 disables it altogether. Unless you know what you are doing, it is best not to change the default timeout settings.

All timeout-related options accept decimal values, as well as subsecond values. For example, 0.1 seconds is a legal (though unwise) choice of timeout. Subsecond timeouts are useful for checking server response times or for testing network latency.

This option allows the use of decimal numbers, usually in conjunction with power suffixes; for example, --limit-rate=2.5k is a legal value.

Note that wget implements the limiting by sleeping the appropriate amount of time after a network read that took less time than specified by the rate. Eventually this strategy causes the TCP transfer to slow down to approximately the specified rate. However, it may take some time for this balance to be achieved, so don’t be surprised if limiting the rate doesn’t work well with very small files.
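The sleeping strategy can be modeled in a few lines. This Python sketch uses hypothetical names and models the idea, not wget's implementation. It computes how long to pause after a read so that the average rate stays at or below the limit.

```python
import time
from typing import BinaryIO


def throttle_delay(bytes_so_far: int, elapsed: float, limit: float) -> float:
    """Seconds to sleep so that bytes_so_far / (elapsed + delay) <= limit."""
    expected = bytes_so_far / limit  # the time the transfer should have taken at the limit
    return max(0.0, expected - elapsed)


def throttled_copy(src: BinaryIO, dst: BinaryIO, limit: float, chunk_size: int = 16 * 1024) -> int:
    """Copy src to dst, sleeping after fast reads to stay near `limit` bytes per second."""
    start = time.monotonic()
    total = 0
    while chunk := src.read(chunk_size):
        dst.write(chunk)
        total += len(chunk)
        time.sleep(throttle_delay(total, time.monotonic() - start, limit))
    return total
```

As the text warns, a short transfer may finish before the sleeps have much effect on the average.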

Specifying a large value for this option is useful if the network or the destination host is down, so that wget can wait long enough to reasonably expect the network error to be fixed before the retry. The waiting interval specified by this function is influenced by --random-wait (see below).

By default, wget will assume a value of 10 seconds.

Note that quota will never affect downloading a single file. So if you specify wget -Q10k ftp://wuarchive.wustl.edu/ls-lR.gz, all of the ls-lR.gz will be downloaded. The same goes even when several URLs are specified on the command-line. However, quota is respected when retrieving either recursively, or from an input file. Thus you may safely type wget -Q2m -i sites; download will be aborted when the quota is exceeded.

Setting quota to 0 or to inf unlimits the download quota.
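Here is a sketch of the quota semantics in Python. The quota is checked between files, so the file during which it is exceeded is still downloaded completely, and 0 (or inf) disables the limit. The sizes and the function name are hypothetical.

```python
import math
from typing import List


def files_fetched(sizes: List[int], quota: float) -> List[int]:
    """Return the sizes of the files a recursive or -i download would fetch under a quota."""
    unlimited = quota == 0 or math.isinf(quota)
    fetched, total = [], 0
    for size in sizes:
        if not unlimited and total > quota:
            break  # An earlier file pushed the total past the quota: stop here.
        fetched.append(size)  # A file that has been started is always finished.
        total += size
    return fetched
```

A single file is never cut short, which matches the -Q10k example above.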

However, it has been reported that in some situations it is not desirable to cache hostnames, even for the duration of a short-running application like wget. With this option wget issues a new DNS lookup (more precisely, a new call to "gethostbyname" or "getaddrinfo") each time it makes a new connection. Please note that this option will not affect caching that might be performed by the resolving library or by an external caching layer, such as NSCD.

By default, wget escapes the characters that are not valid as part of file names on your operating system, as well as control characters that are typically unprintable. This option is useful for changing these defaults, either because you are downloading to a non-native partition, or because you want to disable escaping of the control characters.

The modes are a comma-separated set of text values. The acceptable values are unix, windows, nocontrol, ascii, lowercase, and uppercase. The values unix and windows are mutually exclusive (one will override the other), as are lowercase and uppercase. Those last are special cases, as they do not change the set of characters that would be escaped, but rather force local file paths to be converted either to lower or uppercase.

When mode is set to unix, wget escapes the character / and the control characters in the ranges 0-31 and 128-159. This option is the default on Unix-like OSes.

When mode is set to windows, wget escapes the characters \, |, /, :, ?, ", *, <, >, and the control characters in the ranges 0-31 and 128-159. In addition to this, wget in Windows mode uses + instead of : to separate host and port in local file names, and uses @ instead of ? to separate the query portion of the file name from the rest. Therefore, a URL that would be saved as www.xemacs.org:4300/search.pl?input=blah in Unix mode would be saved as www.xemacs.org+4300/search.pl@input=blah in Windows mode. This mode is the default on Windows.

If you specify nocontrol, then the escaping of the control characters is also switched off. This option may make sense when you are downloading URLs whose names contain UTF-8 characters, on a system which can save and display file names in UTF-8 (some possible byte values used in UTF-8 byte sequences fall in the range of values designated by wget as "controls").

The ascii mode is used to specify that any bytes whose values are outside the range of ASCII characters (that is, greater than 127) shall be escaped. This mode can be useful when saving file names whose encoding does not match the one used locally.
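To illustrate the unix and windows modes, here is a simplified Python sketch that builds a local file name from a host, port, path, and query. It covers only the escaping rules and separators described above, not nocontrol, ascii, or the case modes. It works on characters rather than bytes, and the function names are hypothetical.

```python
from typing import Optional

WINDOWS_UNSAFE = set('\\|/:?"*<>')


def _is_control(code: int) -> bool:
    # The two control ranges escaped in both modes.
    return code <= 31 or 128 <= code <= 159


def escape_part(text: str, mode: str) -> str:
    """Percent-escape one file name component according to the restrict mode."""
    out = []
    for ch in text:
        code = ord(ch)
        unsafe = ch in WINDOWS_UNSAFE if mode == "windows" else ch == "/"
        out.append(f"%{code:02X}" if unsafe or _is_control(code) else ch)
    return "".join(out)


def local_name(host: str, port: Optional[int], path: str, query: str, mode: str = "unix") -> str:
    """Build a local file name from URL pieces using the mode's separators."""
    port_sep, query_sep = ("+", "@") if mode == "windows" else (":", "?")
    name = host if port is None else f"{host}{port_sep}{port}"
    segments = [escape_part(seg, mode) for seg in path.strip("/").split("/") if seg]
    name = "/".join([name] + segments)
    if query:
        name += query_sep + escape_part(query, mode)
    return name
```

With the xemacs example, `local_name("www.xemacs.org", 4300, "/search.pl", "input=blah", "windows")` yields the Windows-mode name quoted above.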

Neither option should normally be needed. By default, an IPv6-aware wget will use the address family specified by the host's DNS record. If the DNS responds with both IPv4 and IPv6 addresses, wget will try them in sequence until it finds one it can connect to. (Also, see the --prefer-family option described below.)

These options can be used to deliberately force the use of IPv4 or IPv6 address families on dual family systems, usually to aid debugging or to deal with broken network configuration. Only one of --inet6-only and --inet4-only may be specified at the same time. Neither option is available in wget compiled without IPv6 support.

This avoids spurious errors and connect attempts when accessing hosts that resolve to both IPv6 and IPv4 addresses from IPv4 networks. For example, www.kame.net resolves to 2001:200:0:8002:203:47ff:fea5:3085 and to 203.178.141.194. When the preferred family is "IPv4", the IPv4 address is used first; when the preferred family is "IPv6", the IPv6 address is used first; if the specified value is "none", the address order returned by DNS is used without change.

Unlike -4 and -6, this option doesn’t inhibit access to any address family, it only changes the order in which the addresses are accessed. Also, note that the reordering performed by this option is stable; it doesn’t affect order of addresses of the same family. That is, the relative order of all IPv4 addresses and of all IPv6 addresses remains intact in all cases.
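Because the reordering is stable, it amounts to a stable sort on a single key. Here is a Python sketch using the addresses from the www.kame.net example; the function name is hypothetical.

```python
import ipaddress
from typing import List


def order_addresses(addresses: List[str], prefer: str = "none") -> List[str]:
    """Reorder resolved addresses like --prefer-family: IPv4, IPv6, or none."""
    if prefer == "none":
        return list(addresses)  # Keep the order returned by DNS.
    wanted = 4 if prefer == "IPv4" else 6
    # sorted() is stable, so addresses of the same family keep their relative order.
    return sorted(addresses, key=lambda a: ipaddress.ip_address(a).version != wanted)


KAME = ["2001:200:0:8002:203:47ff:fea5:3085", "203.178.141.194"]
print(order_addresses(KAME, "IPv4"))  # ['203.178.141.194', '2001:200:0:8002:203:47ff:fea5:3085']
```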

--password=password

You can set the default state of IRI support using the "iri" command in .wgetrc. That setting may be overridden from the command line.

wget uses the function "nl_langinfo()" and then the "CHARSET" environment variable to get the locale. If it fails, ASCII is used.

You can set the default local encoding using the "local_encoding" command in .wgetrc. That setting may be overridden from the command line.

For HTTP, remote encoding can be found in the HTTP "Content-Type" header and in the HTML "Content-Type http-equiv" meta tag.

You can set the default encoding using the "remoteencoding" command in .wgetrc. That setting may be overridden from the command line.

Directory options

| -nd, --no-directories | Do not create a hierarchy of directories when retrieving recursively. With this option turned on, all files will get saved to the current directory, without clobbering (if a name shows up more than once, the file names will get extensions .n). |

| -x, --force-directories | The opposite of -nd; create a hierarchy of directories, even if one would not have been created otherwise. For example, wget -x http://fly.srk.fer.hr/robots.txt will save the downloaded file to fly.srk.fer.hr/robots.txt. |

| -nH, --no-host-directories | Disable generation of host-prefixed directories. By default, invoking wget with -r http://fly.srk.fer.hr/ will create a structure of directories beginning with fly.srk.fer.hr/. This option disables such behavior. |

| --protocol-directories | Use the protocol name as a directory component of local file names. For example, with this option, wget -r http://host will save to http/host/... rather than just to host/.... |

| --cut-dirs=number | Ignore number directory components. This option is useful for getting a fine-grained control over the directory where recursive retrieval will be saved. |

Take, for example, the directory at ftp://ftp.xemacs.org/pub/xemacs/. If you retrieve it with -r, it will be saved locally under ftp.xemacs.org/pub/xemacs/. While the -nH option can remove the ftp.xemacs.org/ part, you are still stuck with pub/xemacs, which is where --cut-dirs comes in handy; it makes wget not "see" number remote directory components. Here are several examples of how the --cut-dirs option works:

| (no options) | ftp.xemacs.org/pub/xemacs/ |

| -nH | pub/xemacs/ |

| -nH --cut-dirs=1 | xemacs/ |

| -nH --cut-dirs=2 | . |

| --cut-dirs=1 | ftp.xemacs.org/xemacs/ |

If you just want to get rid of the directory structure, this option is similar to a combination of -nd and -P. However, unlike -nd, --cut-dirs does not lose subdirectories; for instance, with -nH --cut-dirs=1, a beta/ subdirectory will be placed in xemacs/beta, as one would expect.
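The table above can be reproduced with a short Python sketch that derives the local directory for a URL under -nH, --cut-dirs, and --protocol-directories. It handles directory URLs only and ignores ports, and the function name is hypothetical.

```python
from urllib.parse import urlsplit


def local_dir(url: str, no_host: bool = False, cut_dirs: int = 0,
              protocol_dirs: bool = False) -> str:
    """Return the local directory the given options would use for a directory URL."""
    parts = urlsplit(url)
    remote_dirs = [d for d in parts.path.split("/") if d]
    kept = remote_dirs[cut_dirs:]  # --cut-dirs drops leading remote components
    prefix = []
    if protocol_dirs:
        prefix.append(parts.scheme)          # --protocol-directories
    if not no_host:
        prefix.append(parts.hostname or "")  # omitted with -nH
    components = prefix + kept
    return "/".join(components) + "/" if components else "."
```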

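| -P prefix, --directory-prefix=prefix | Set the directory prefix to prefix. The directory prefix is the directory where all other files and subdirectories will be saved, i.e., the top of the retrieval tree. The default is . (the current directory). |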
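The -nd behavior described at the top of this section, where a repeated file name gets a .n extension instead of being overwritten, can be sketched as follows. unique_name is a hypothetical helper, not wget code; it assumes the numbering starts at .1 and climbs until a free name is found.

```shell
# unique_name NAME: hypothetical helper (not part of wget). Prints NAME if no
# such file exists, otherwise the first free NAME.1, NAME.2, ...
unique_name() {
  [ -e "$1" ] || { printf '%s\n' "$1"; return; }
  n=1
  while [ -e "$1.$n" ]; do
    n=$((n + 1))
  done
  printf '%s\n' "$1.$n"
}

# Demonstration in a scratch directory where two copies of index.html exist.
demo_dir=$(mktemp -d)
touch "$demo_dir/index.html" "$demo_dir/index.html.1"
unique_name "$demo_dir/index.html"   # prints $demo_dir/index.html.2
unique_name "$demo_dir/robots.txt"   # prints $demo_dir/robots.txt
```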

HTTP options

| -E, --adjust-extension (formerly --html-extension) | If a file of type application/xhtml+xml or text/html is downloaded and the URL does not end with the regexp `\.[Hh][Tt][Mm][Ll]?`, this option will cause the suffix .html to be appended to the local file name. This option is useful, for instance, when you’re mirroring a remote site that uses .asp pages, but you want the mirrored pages to be viewable on your stock Apache server. Another good use for this is when you’re downloading CGI-generated materials. A URL like http://site.com/article.cgi?25 will be saved as article.cgi?25.html. |

Note that file names changed in this way will be re-downloaded every time you re-mirror a site, because wget can’t tell that the local X.html file corresponds to remote URL X (since it doesn’t yet know that the URL produces output of type text/html or application/xhtml+xml).

As of version 1.12, wget will also ensure that any downloaded files of type text/css end in the suffix .css, and the option was renamed from --html-extension to --adjust-extension, to better reflect its new behavior. The old option name is still acceptable, but should now be considered deprecated.

At some point in the future, this option may well be expanded to include suffixes for other types of content, including content types that are not parsed by wget.
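A sketch of the -E rule for HTML content. adjusted_name is a hypothetical helper; for simplicity it applies the regexp to the local file name rather than to the URL, and it leaves out the text/css case added in 1.12.

```shell
# adjusted_name NAME CONTENT_TYPE: hypothetical helper (not part of wget).
# HTML or XHTML content whose name does not already end in .htm or .html
# (in any letter case) gets ".html" appended; other types are left alone.
adjusted_name() {
  case $2 in
    text/html|application/xhtml+xml)
      if printf '%s\n' "$1" | grep -Eq '\.[Hh][Tt][Mm][Ll]?$'; then
        printf '%s\n' "$1"
      else
        printf '%s.html\n' "$1"
      fi
      ;;
    *)
      printf '%s\n' "$1"
      ;;
  esac
}

adjusted_name 'article.cgi?25' text/html   # article.cgi?25.html
adjusted_name index.HTM text/html          # index.HTM
adjusted_name logo.png image/png           # logo.png
```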

| --http-user=user, --http-password=password | Specify the username user and password password on an HTTP server. |

Another way to specify username and password is in the URL itself. Either method reveals your password to anyone who bothers to run ps. To prevent the passwords from being seen, store them in .wgetrc or .netrc, and make sure to protect those files from other users with chmod. If the passwords are important, do not leave them lying in those files either; edit the files and delete them after wget has started the download.
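One way to keep a password out of the process list is a .netrc file that only its owner can read. In this sketch the file goes into a scratch directory standing in for $HOME, and the host name and credentials are placeholders.

```shell
netrc_home=$(mktemp -d)   # stand-in for $HOME; normally the file is ~/.netrc
(
  umask 077               # new files readable by the owner only (this subshell only)
  cat > "$netrc_home/.netrc" <<'EOF'
machine ftp.example.org
login myuser
password mysecret
EOF
)
chmod 600 "$netrc_home/.netrc"   # in real use, also tightens a file that already existed

# wget reads .netrc from $HOME, so no password appears on the command line:
#   HOME="$netrc_home" wget ftp://ftp.example.org/private/file.tar.gz
```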

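| --no-cache | Disable server-side cache: wget asks the remote server for a fresh copy of the file rather than a cached version. Caching is allowed by default. |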

You will typically use --load-cookies when mirroring sites that require that you be logged in to access some or all of their content. The login process typically works by the web server issuing an HTTP cookie upon receiving and verifying your credentials. The cookie is then resent by the browser when accessing that part of the site, and so proves your identity.

Mirroring such a site requires wget to send the same cookies your browser sends when communicating with the site. To do this, use --load-cookies: point wget to the location of the cookies.txt file, and it will send the same cookies your browser would send in the same situation. Different browsers keep text cookie files in different locations:

| Netscape 4.x | The cookies are in `~/.netscape/cookies.txt`. |

| Mozilla and Netscape 6.x | Mozilla’s cookie file is also named cookies.txt, located somewhere under `~/.mozilla`, in the directory of your profile. The full path usually ends up looking somewhat like `~/.mozilla/default/some-weird-string/cookies.txt`. |

| Internet Explorer | You can produce a cookie file that wget can use via the File menu: Import and Export, Export Cookies. Tested with Internet Explorer 5 (wow, that’s old), but it is not guaranteed to work with earlier versions. |

| other browsers | If you are using a different browser to create your cookies, --load-cookies only works if you can locate or produce a cookie file in the Netscape format that wget expects. |

If you cannot use --load-cookies, there might still be an alternative. If your browser supports a "cookie manager", you can use it to view the cookies used when accessing the site you’re mirroring. Write down the name and value of the cookie, and manually instruct wget to send those cookies with --no-cookies --header "Cookie: name=value", bypassing the "official" cookie support.
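A sketch of this manual cookie workaround. cookie_header and fetch_with_cookie are hypothetical helpers, the cookie name and value are placeholders, and WGET is an assumed variable of this script (not a wget feature) that lets you substitute another command, for example one that only prints the command line.

```shell
cookie_header() {       # cookie_header NAME VALUE
  printf 'Cookie: %s=%s\n' "$1" "$2"
}

fetch_with_cookie() {   # fetch_with_cookie URL NAME VALUE
  # --no-cookies disables wget's own cookie handling, so only this header is sent
  ${WGET:-wget} --no-cookies --header "$(cookie_header "$2" "$3")" "$1"
}

# usage (placeholder values):
#   fetch_with_cookie http://www.example.org/members/ session_id 0123abcd
```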

Since the cookie file format does not normally carry session cookies, wget marks them with an expiry timestamp of 0. wget's --load-cookies recognizes those as session cookies, but it might confuse other browsers. Also, note that cookies so loaded will be treated as other session cookies, which means that if you want --save-cookies to preserve them again, you must use --keep-session-cookies again.
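Cookie files in the Netscape text format hold one cookie per line, with tab-separated fields for domain, subdomain flag, path, secure flag, expiry time (seconds since the epoch), name, and value. The sketch below writes a small placeholder jar and lists the session cookies, that is, the entries whose expiry field is 0; session_cookies is a hypothetical helper.

```shell
demo_jar=$(mktemp)
# Two placeholder cookies; field 5 is the expiry time.
printf '# Netscape HTTP Cookie File\n' > "$demo_jar"
printf '.example.org\tTRUE\t/\tFALSE\t0\tsession_id\t0123abcd\n' >> "$demo_jar"
printf '.example.org\tTRUE\t/\tFALSE\t2147483647\ttheme\tdark\n' >> "$demo_jar"

# Print the names of cookies whose expiry is 0 (session cookies), skipping comments.
session_cookies() {
  awk -F '\t' '!/^#/ && $5 == 0 { print $6 }' "$1"
}

session_cookies "$demo_jar"   # session_id
```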

With --ignore-length, wget ignores the "Content-Length" header, as if it never existed.

You may define more than one additional header by specifying --header more than once.

Specification of an empty string as the header value will clear all previous user-defined headers.

As of wget 1.10, --header can be used to override headers otherwise generated automatically. For example, it can instruct wget to connect to localhost while specifying foo.bar in the "Host" header.

In versions of wget prior to 1.10, such use of --header caused duplicate headers to be sent.
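The --header uses described above, written as small wrapper functions. The function names and the WGET override variable are hypothetical; the Host example follows the text above, and the two-header example uses the values from the GNU wget manual.

```shell
# Connect to localhost (or URL) while sending "Host: HOSTNAME" (wget 1.10 or later).
fetch_as_host() {   # fetch_as_host HOSTNAME [URL]
  ${WGET:-wget} --header="Host: $1" "${2:-http://localhost/}"
}

# Each --header option adds one more header line to the request.
fetch_croatian() {
  ${WGET:-wget} --header='Accept-Charset: iso-8859-2' \
                --header='Accept-Language: hr' http://fly.srk.fer.hr/
}

# usage: fetch_as_host foo.bar
```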

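| --proxy-user=user, --proxy-password=password | Specify the username user and password password for authentication on a proxy server. |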

Security considerations similar to those with --http-password pertain here as well.

| -U agent-string, --user-agent=agent-string | Identify as agent-string to the HTTP server. |

The HTTP protocol allows the clients to identify themselves using a "User-Agent" header field. This enables distinguishing the WWW software, usually for statistical purposes or for tracing of protocol violations. wget normally identifies as "Wget/version", version being the current version number of wget.

However, some sites have been known to impose the policy of tailoring the output according to the "User-Agent"-supplied information. While this is not such a bad idea in theory, it has been abused by servers denying information to clients other than (historically) Netscape or, more frequently, Microsoft Internet Explorer. This option allows you to change the "User-Agent" line issued by wget. Use of this option is discouraged, unless you really know what you are doing.

Specifying an empty user agent with --user-agent="" instructs wget not to send the "User-Agent" header in HTTP requests.

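| --post-data=string, --post-file=file | Use POST as the method for all HTTP requests and send the specified data in the request body. --post-data sends string as data, whereas --post-file sends the contents of file. Other than that, they work in exactly the same way. |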

Please be aware that wget needs to know the size of the POST data in advance. Therefore the argument to --post-file must be a regular file; specifying a FIFO or something like /dev/stdin won’t work. It’s not quite clear how to work around this limitation inherent in HTTP/1.0. Although HTTP/1.1 introduces chunked transfer that doesn’t require knowing the request length in advance, a client can’t use chunked unless it knows it’s talking to an HTTP/1.1 server. And it can’t know that until it receives a response, which in turn requires the request to have been completed, which is sort of a chicken-and-egg problem.
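Because --post-file needs a regular file, data arriving on a pipe can first be buffered into a temporary file. buffer_post_body is a hypothetical helper, and the form fields and URL in the final comment are placeholders.

```shell
# buffer_post_body: hypothetical helper. Copies standard input into a new
# temporary regular file and prints that file's path.
buffer_post_body() {
  tmp=$(mktemp) || return 1
  cat > "$tmp"
  printf '%s\n' "$tmp"
}

body=$(printf 'user=foo&password=bar' | buffer_post_body)
wc -c < "$body"   # 21: the size is known before any request is made
# wget --post-file="$body" http://server.com/auth.php; rm -f "$body"
```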

Note that if wget is redirected after the POST request is completed, it will not send the POST data to the redirected URL. This is because URLs that process POST often respond with a redirection to a regular page, which does not desire or accept POST. It is not completely clear that this behavior is optimal; if it doesn’t work out, it might be changed in the future.

The following approach logs in to a server using POST and then downloads the desired pages, presumably accessible only to authorized users. First, log in to the server with --post-data, saving the cookies it issues with --save-cookies; this needs to be done only once.

Then grab the page (or pages) you care about with --load-cookies, pointing it at the saved cookie file.

If the server is using session cookies to track user authentication, the above will not work because --save-cookies will not save them (and neither will browsers) and the cookies.txt file will be empty. In that case, use --keep-session-cookies along with --save-cookies to force saving of session cookies.
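A sketch of the login-then-download sequence. The URL, form field names, and credentials are placeholders (the same ones the GNU wget manual uses), site_login, fetch_protected, and the WGET override variable are hypothetical, and --keep-session-cookies is included so that session cookies are saved too. Keep in mind that --post-data puts the password on the command line, where anyone running ps can see it.

```shell
jar=cookies.txt   # where the login cookies are kept

# Log in once; --keep-session-cookies also saves cookies that have no expiry date.
site_login() {
  ${WGET:-wget} --save-cookies "$jar" --keep-session-cookies \
    --post-data 'user=foo&password=bar' http://server.com/auth.php
}

# Fetch a protected page (and its requisites, via -p) using the saved cookies.
fetch_protected() {   # fetch_protected URL
  ${WGET:-wget} --load-cookies "$jar" -p "$1"
}

# usage: site_login && fetch_protected http://server.com/interesting/article.php
```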

The --content-disposition option is useful for some file-downloading CGI programs that use "Content-Disposition" headers to describe what the name of a downloaded file should be.

Use of --auth-no-challenge is not recommended; it is intended only to support a few obscure servers that never send HTTP authentication challenges but accept unsolicited authentication information, say, in addition to form-based authentication.

HTTPS (SSL/TLS) options

To support encrypted HTTP (HTTPS) downloads, wget must be compiled with an external SSL library (OpenSSL, or GnuTLS in newer versions). If wget is compiled without SSL support, none of these options are available.

| --secure-protocol=protocol | Choose the secure protocol to be used. Legal values are auto, SSLv2, SSLv3, and TLSv1. If auto is used (the default), the SSL library is given the liberty of choosing the appropriate protocol automatically, which is achieved by sending an SSLv2 greeting and announcing support for SSLv3 and TLSv1. Specifying SSLv2, SSLv3, or TLSv1 forces the use of the corresponding protocol. This option is useful when talking to old and buggy SSL server implementations that make it hard for OpenSSL to choose the correct protocol version. Fortunately, such servers are quite rare. |

| --no-check-certificate | Don’t check the server certificate against the available certificate authorities. Also, don’t require the URL hostname to match the common name presented by the certificate. As of wget 1.10, the default is to verify the server’s certificate against the recognized certificate authorities, breaking the SSL handshake and aborting the download if the verification fails. Although this provides more secure downloads, it does break interoperability with some sites that worked with previous wget versions, particularly those using self-signed, expired, or otherwise invalid certificates. This option forces an "insecure" mode of operation that turns the certificate verification errors into warnings and allows you to proceed. If you encounter "certificate verification" errors or ones saying that "common name doesn’t match requested hostname", you can use this option to bypass the verification and proceed with the download. Only use this option if you are otherwise convinced of the site’s authenticity, or if you really don’t care about the validity of its certificate. It is almost always a bad idea not to check the certificates when transmitting confidential or important data. |

| --certificate=file | Use the client certificate stored in file. This information is needed for servers that are configured to require certificates from the clients that connect to them. Normally a certificate is not required and this switch is optional. |

| --certificate-type=type | Specify the type of the client certificate. Legal values are PEM (assumed by default) and DER, also known as ASN1. |

| --private-key=file | Read the private key from file. This option allows you to provide the private key in a file separate from the certificate. |

| --private-key-type=type | Specify the type of the private key. Accepted values are PEM (the default) and DER. |

| --ca-certificate=file | Use file as the file with the bundle of certificate authorities ("CA") to verify the peers. The certificates must be in PEM format. Without this option, wget looks for CA certificates at the system-specified locations, chosen at OpenSSL installation time. |

| --ca-directory=directory | Specifies a directory containing CA certificates in PEM format. Each file contains one CA certificate, and the file name is based on a hash value derived from the certificate. This is achieved by processing a certificate directory with the c_rehash utility supplied with OpenSSL. Using --ca-directory is more efficient than --ca-certificate when many certificates are installed because it allows wget to fetch certificates on demand. Without this option, wget looks for CA certificates at the system-specified locations, chosen at OpenSSL installation time. |

| --random-file=file | Use file as the source of random data for seeding the pseudorandom number generator on systems without /dev/random. On such systems the SSL library needs an external source of randomness to initialize. Randomness may be provided by EGD (see --egd-file below) or read from an external source specified by the user. If this option is not specified, wget looks for random data in $RANDFILE or, if that is unset, in $HOME/.rnd. If none of those are available, it is likely that SSL encryption will not be usable. If you’re getting the "Could not seed OpenSSL PRNG; disabling SSL." error, you should provide random data using some of the methods described above. |

| --egd-file=file | Use file as the EGD socket. EGD stands for Entropy Gathering Daemon, a user-space program that collects data from various unpredictable system sources and makes it available to other programs that might need it. Encryption software, such as the SSL library, needs sources of non-repeating randomness to seed the random number generator used to produce cryptographically strong keys. OpenSSL allows the user to specify their own source of entropy using the RANDFILE environment variable. If this variable is unset, or if the specified file does not produce enough randomness, OpenSSL will read random data from the EGD socket specified using this option. If this option is not specified (and the equivalent startup command is not used), EGD is never contacted. EGD is not needed on modern Unix systems that support /dev/random. |

FTP options

| --ftp-user=user, --ftp-password=password | Specify the username user and password password on an FTP server. Without this, or the corresponding startup option, the password defaults to -wget@, normally used for anonymous FTP. |

Another way to specify username and password is in the URL itself. Either method reveals your password to anyone who bothers to run ps. To prevent the passwords from being seen, store them in .wgetrc or .netrc, and make sure to protect those files from other users with chmod. If the passwords are important, do not leave them lying in those files either; edit the files and delete them after wget has started the download.

The --no-remove-listing option keeps the temporary .listing files that wget generates for FTP retrievals. Note that even though wget writes to a known file name for this file, this is not a security hole in the scenario of a user making .listing a symbolic link to /etc/passwd or something and asking root to run wget in his or her directory. Depending on the options used, either wget will refuse to write to .listing, making the globbing/recursion/time-stamping operation fail, or the symbolic link will be deleted and replaced with the actual .listing file, or the listing will be written to a .listing.number file.

Even though this situation isn’t a problem, root should never run wget in a non-trusted user’s directory. A user could do something as simple as linking index.html to /etc/passwd and asking root to run wget with -N or -r so the file will be overwritten.

By default, FTP globbing is turned on if the URL contains a globbing character such as *, ?, [ or ]. The --no-glob option turns globbing off.

You may have to quote the URL to protect it from being expanded by your shell. Globbing makes wget look for a directory listing, which is system-specific. This is why it currently works only with Unix FTP servers (and the ones emulating Unix ls output).

If the machine is connected to the Internet directly, both passive and active FTP should work equally well. Behind most firewall and NAT configurations, passive FTP has a better chance of working. However, in some rare firewall configurations, active FTP actually works when passive FTP doesn’t. If you suspect this to be the case, use --no-passive-ftp, or set "passive_ftp=off" in your init file.
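The init-file setting mentioned above can live in a startup file. This sketch writes one to a scratch directory and relies on the WGETRC environment variable, which tells wget to read that file instead of ~/.wgetrc; the FTP URL is a placeholder.

```shell
rc_dir=$(mktemp -d)   # scratch location; normally the file is ~/.wgetrc
cat > "$rc_dir/wgetrc" <<'EOF'
# Always use active FTP, like passing --no-passive-ftp on every run
passive_ftp = off
EOF

# usage: WGETRC="$rc_dir/wgetrc" wget ftp://ftp.example.org/pub/file.tar.gz
```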

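Usually, when retrieving FTP directories recursively and a symbolic link is encountered, wget does not download the linked-to file but creates a matching symbolic link on the local file system. When --retr-symlinks is specified, however, symbolic links are traversed and the pointed-to files are retrieved. At this time, this option does not cause wget to traverse symlinks to directories and recurse through them, but in the future it should be enhanced to do this.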

Note that when retrieving a file (not a directory) because it was specified on the command-line, rather than because it was recursed to, this option has no effect. Symbolic links are always traversed in this case.

Recursive retrieval options

| -r, --recursive | Turn on recursive retrieving. |

| -l depth, --level=depth | Specify recursion maximum depth level depth. The default maximum depth is 5. |

| --delete-after | This option tells wget to delete every single file it downloads, after having done so. It is useful for pre-fetching popular pages through a proxy, e.g., with wget -r -nd --delete-after followed by the page URL. |

The -r option is to retrieve recursively, and -nd to not create directories.

Note that --delete-after deletes files on the local machine. It does not issue the DELE FTP command to remote FTP sites, for instance. Also, note that when --delete-after is specified, --convert-links is ignored, so .orig files are not created in the first place.
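The proxy pre-fetching use of --delete-after, wrapped in a function. warm_proxy_cache and the WGET override variable are hypothetical, and the URL is a placeholder; wget picks up the proxy from the http_proxy environment variable.

```shell
# -r recurses, -nd keeps everything in one directory, and --delete-after removes
# each file once it has been fetched, so only the proxy keeps a copy.
warm_proxy_cache() {   # warm_proxy_cache URL
  ${WGET:-wget} -r -nd --delete-after "$1"
}

# usage (with http_proxy already set in the environment):
#   warm_proxy_cache 'http://whatever.com/~popular/page/'
```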

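| -k, --convert-links | After the download is complete, convert the links in the document to make them suitable for local viewing. This affects not only the visible hyperlinks, but any part of the document that links to external content, such as embedded images, links to style sheets, and hyperlinks to non-HTML content. Each link will be changed in one of two ways: |

1. The links to files that have been downloaded by wget will be changed to refer to the file they point to as a relative link. Example: if the downloaded file /foo/doc.html links to /bar/img.gif, also downloaded, then the link in doc.html will be modified to point to ../bar/img.gif. This kind of transformation works reliably for arbitrary combinations of directories.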

2. The links to files that have not been downloaded by wget will be changed to include hostname and absolute path of the location they point to. Example: if the downloaded file /foo/doc.html links to /bar/img.gif (or to ../bar/img.gif), then the link in doc.html will be modified to point to http://hostname/bar/img.gif.

Because of this, local browsing works reliably: if a linked file was downloaded, the link will refer to its local name; if it was not downloaded, the link will refer to its full Internet address rather than presenting a broken link. The fact that the former links are converted to relative links ensures that you can move the downloaded hierarchy to another directory.

Note that only at the end of the download can wget know which links have been downloaded. Because of that, the work done by -k will be performed at the end of all the downloads.
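Rule 1 above (a link to a downloaded file becomes a relative link) comes down to a path computation. rel_link is a hypothetical helper that handles absolute site paths only; it is a sketch of the idea, not wget's own conversion code.

```shell
# rel_link DOC TARGET: hypothetical helper. Both arguments are absolute paths on
# the same site; prints TARGET relative to the directory containing DOC.
rel_link() {
  case $2 in /*) ;; *) return 1 ;; esac   # only absolute paths are handled
  from_dir=${1%/*}/                        # e.g. /foo/doc.html -> /foo/
  up=
  # Climb one directory at a time until from_dir is a prefix of the target.
  while [ "${2#"$from_dir"}" = "$2" ]; do
    from_dir=${from_dir%/*/}/
    up="../$up"
  done
  printf '%s%s\n' "$up" "${2#"$from_dir"}"
}

rel_link /foo/doc.html /bar/img.gif   # ../bar/img.gif
rel_link /foo/doc.html /foo/pic.gif   # pic.gif
```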

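| -p, --page-requisites | This option causes wget to download all the files that are necessary to properly display a given HTML page, including such things as inlined images, sounds, and referenced stylesheets. |

For instance, say document 1.html contains an `<IMG>` tag referencing 1.gif and an `<A>` tag pointing to external document 2.html. Say that 2.html is similar but that its image is 2.gif and it links to 3.html. Say this continues up to some arbitrarily high number.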

If one runs wget -r -l 2 on 1.html, then 1.html, 1.gif, 2.html, 2.gif, and 3.html will be downloaded. As you can see, 3.html is without its requisite 3.gif because wget is counting the number of hops (up to 2) away from 1.html to determine where to stop the recursion.

However, with wget -r -l 2 -p, all the above files and 3.html’s requisite 3.gif will be downloaded. Similarly, wget -r -l 1 -p will cause 1.html, 1.gif, 2.html, and 2.gif to be downloaded. One might think that wget -r -l 0 -p would download just 1.html and 1.gif, but unfortunately this is not the case, because -l 0 is equivalent to -l inf; that is, infinite recursion. To download a single HTML page (or a handful of them, all specified on the command line or in a -i URL input file) and its (or their) requisites, leave off -r and -l and use just -p.
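The hop counting above can be modelled for the 1.html, 2.html, 3.html chain. hops is a hypothetical function, not wget code. In it, a depth of 0 stands for -p without -r (a single page), not for wget’s -l 0, which means infinite recursion.

```shell
# hops DEPTH WITH_P: hypothetical model of the chain where each N.html embeds
# N.gif and links to (N+1).html. Prints the files fetched when recursion stops
# after DEPTH hops; WITH_P=1 adds -p (page requisites).
hops() {
  depth=$1 with_p=$2 n=1 files=
  while [ "$n" -le $((depth + 1)) ]; do
    files="$files $n.html"     # N.html is N-1 hops away from 1.html
    # N.gif is N hops away: fetched if within the limit, or pulled in by -p
    if [ "$n" -le "$depth" ] || [ "$with_p" -eq 1 ]; then
      files="$files $n.gif"
    fi
    n=$((n + 1))
  done
  printf '%s\n' "${files# }"
}

hops 2 0   # -r -l 2:    1.html 1.gif 2.html 2.gif 3.html
hops 2 1   # -r -l 2 -p: 1.html 1.gif 2.html 2.gif 3.html 3.gif
hops 1 1   # -r -l 1 -p: 1.html 1.gif 2.html 2.gif
hops 0 1   # -p alone:   1.html 1.gif
```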

Note that wget will behave as if -r had been specified, but only that single page and its requisites will be downloaded. Links from that page to external documents will not be followed. Actually, to download a single page and all its requisites (even if they exist on separate websites), and make sure the lot displays properly locally, this author likes to use a few options in addition to -p.
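For saving a single page for offline viewing, the GNU wget manual combines -p with -E, -H, -k, and -K. A sketch wrapper follows; save_page and the WGET override variable are hypothetical names.

```shell
# Save one page with everything needed to display it offline:
#   -E  add .html to HTML pages that lack it
#   -H  allow requisites hosted on other sites
#   -k  convert links for local viewing
#   -K  keep the original of each converted file with a .orig suffix
#   -p  download page requisites (images, stylesheets, ...)
save_page() {   # save_page URL
  ${WGET:-wget} -E -H -k -K -p "$1"
}

# usage: save_page http://www.example.org/doc.html
```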

To finish off this topic, it’s worth knowing that wget’s idea of an external document link is any URL specified in an `<A>` tag, an `<AREA>` tag, or a `<LINK>` tag other than `<LINK REL="stylesheet">`.

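| --strict-comments | Turn on strict parsing of HTML comments. The default is to terminate comments at the first occurrence of `-->`. |

According to specifications, HTML comments are expressed as SGML declarations. A declaration is special markup that begins with `<!` and ends with `>`, such as `<!DOCTYPE ...>`, that may contain comments between a pair of `--` delimiters. HTML comments are "empty declarations", SGML declarations without any non-comment text. Therefore, `<!--foo-->` is a valid comment, and so is `<!--one-- --two-->`, but `<!--1--2-->` is not.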

On the other hand, most HTML writers don’t perceive comments as anything other than text delimited with `<!--` and `-->`, which is not quite the same. For example, something like `<!------------>` works as a valid comment as long as the number of dashes is a multiple of four. If not, the comment technically lasts until the next `--`, which may be at the other end of the document. Because of this, many popular browsers completely ignore the specification and implement what users have come to expect: comments delimited with `<!--` and `-->`.

Until version 1.9, wget interpreted comments strictly, which resulted in missing links in many web pages that displayed fine in browsers, but had the misfortune of containing non-compliant comments. Beginning with version 1.9, wget has joined the ranks of clients that implement "naïve" comments, terminating each comment at the first occurrence of `-->`.

If, for whatever reason, you want strict comment parsing, use --strict-comments to turn it on.

Recursive accept/reject options

| -A acclist, --accept acclist; -R rejlist, --reject rejlist | Specify comma-separated lists of file name suffixes or patterns to accept or reject. Note that if any of the wildcard characters, *, ?, [ or ], appear in an element of acclist or rejlist, it will be treated as a pattern, rather than a suffix. |

| -D domain-list, --domains=domain-list | Set domains to be followed. domain-list is a comma-separated list of domains. Note that it does not turn on -H. |

| --exclude-domains domain-list | Specify the domains that are not to be followed. |

| --follow-ftp | Follow FTP links from HTML documents. Without this option, wget will ignore all the FTP links. |

| --follow-tags=list | wget has an internal table of HTML tag/attribute pairs that it considers when looking for linked documents during a recursive retrieval. If a user wants only a subset of those tags to be considered, however, he or she should specify such tags in a comma-separated list with this option. |

| --ignore-tags=list | This option is the opposite of the --follow-tags option. To skip certain HTML tags when recursively looking for documents to download, specify them in a comma-separated list. |

In the past, --ignore-tags was the best bet for downloading a single page and its requisites, using a command line that combined --ignore-tags=a,area with -H, -k, -K, and -r.

However, the author of this option came across a page with tags like `<LINK REL="home" HREF="/">` and came to the realization that specifying tags to ignore was not enough. One can’t just tell wget to ignore `<LINK>`, because then stylesheets will not be downloaded. Now the best bet for downloading a single page and its requisites is the dedicated --page-requisites option.

| --ignore-case | Ignore case when matching files and directories. This influences the behavior of -R, -A, -I, and -X options, as well as globbing implemented when downloading from FTP sites. For example, with this option, -A *.txt will match file1.txt, but also file2.TXT, file3.TxT, and so on. |

| -H, --span-hosts | Enable spanning across hosts when doing recursive retrieving. |

| -L, --relative | Follow relative links only. Useful for retrieving a specific homepage without any distractions, not even those from the same hosts. |

| -I list, --include-directories=list | Specify a comma-separated list of directories you want to follow when downloading. Elements of list may contain wildcards. |

| -X list, --exclude-directories=list | Specify a comma-separated list of directories you want to exclude from download. Elements of list may contain wildcards. |

| -np, --no-parent | Do not ever ascend to the parent directory when retrieving recursively. This is a useful option, since it guarantees that only the files below a certain hierarchy will be downloaded. |

Files

| /etc/wgetrc | Default location of the global startup file. |

| .wgetrc | User startup file. |

Examples

Download the default homepage file (index.htm) from www.computerhope.com. The file will be saved to the working directory.

Download the file archive.zip from www.example.org, and limit bandwidth usage of the download to 200k/s.

Download archive.zip from example.org, and if a partial download exists in the current directory, resume the download where it left off.

Download archive.zip in the background, returning you to the command prompt in the interim.

Use "web spider" mode to check whether a remote file exists, without downloading it.

Download a complete mirror of the website www.example.org to the folder ./example-mirror for local viewing.

Stop downloading archive.zip once five megabytes have been successfully transferred. This transfer can then later be resumed using the -c option.
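Commands matching most of the example descriptions above, written as small wrapper functions. This is a sketch: the function names and the WGET override variable are hypothetical, the URLs are the placeholders from the descriptions, and the flags are one reasonable choice for each description (-m mirrors, -k and -p prepare the copy for local viewing, -P sets the target folder), not necessarily the exact commands the original examples used.

```shell
url=http://www.example.org/archive.zip   # placeholder from the descriptions above

fetch_homepage() { ${WGET:-wget} https://www.computerhope.com/; }
fetch_limited()  { ${WGET:-wget} --limit-rate=200k "$url"; }  # at most 200 KB/s
fetch_resume()   { ${WGET:-wget} -c "$url"; }                 # continue a partial file
fetch_in_bg()    { ${WGET:-wget} -b "$url"; }                 # output goes to wget-log
check_exists()   { ${WGET:-wget} --spider "$url"; }           # nothing is saved
mirror_site() {
  ${WGET:-wget} -m -k -p -P ./example-mirror http://www.example.org/
}
```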

Related commands

curl — Transfer data to or from a server.
